The treadmill, and the slop

A creator who runs a short-form channel is on a cadence treadmill. The platform algorithms reward consistency (three videos a week, every week, beats one video done perfectly), and the manual workflow doesn't survive that schedule. Script the voiceover. Source the B-roll. Hand-burn the captions. Mix the music. Format for vertical. Two hours of mechanical labour per video, plus the editorial labour, plus the platform-specific export. The mechanical tail is the tail that breaks the cadence.

Today's generic AI clipping tools don't fix this in a way the creator can stand to use. They optimise for "engagement signals", meaning whatever pattern a recommendation algorithm rewards on average, and they flatten every creator who runs through them into the same algorithm-shaped paste. Same hook patterns. Same caption fonts. Same energy. The audience can detect it within the first two seconds, and the platforms have started detecting it too. The creator who survives the next platform rule change is the creator who hasn't traded their voice for a slightly higher retention curve.

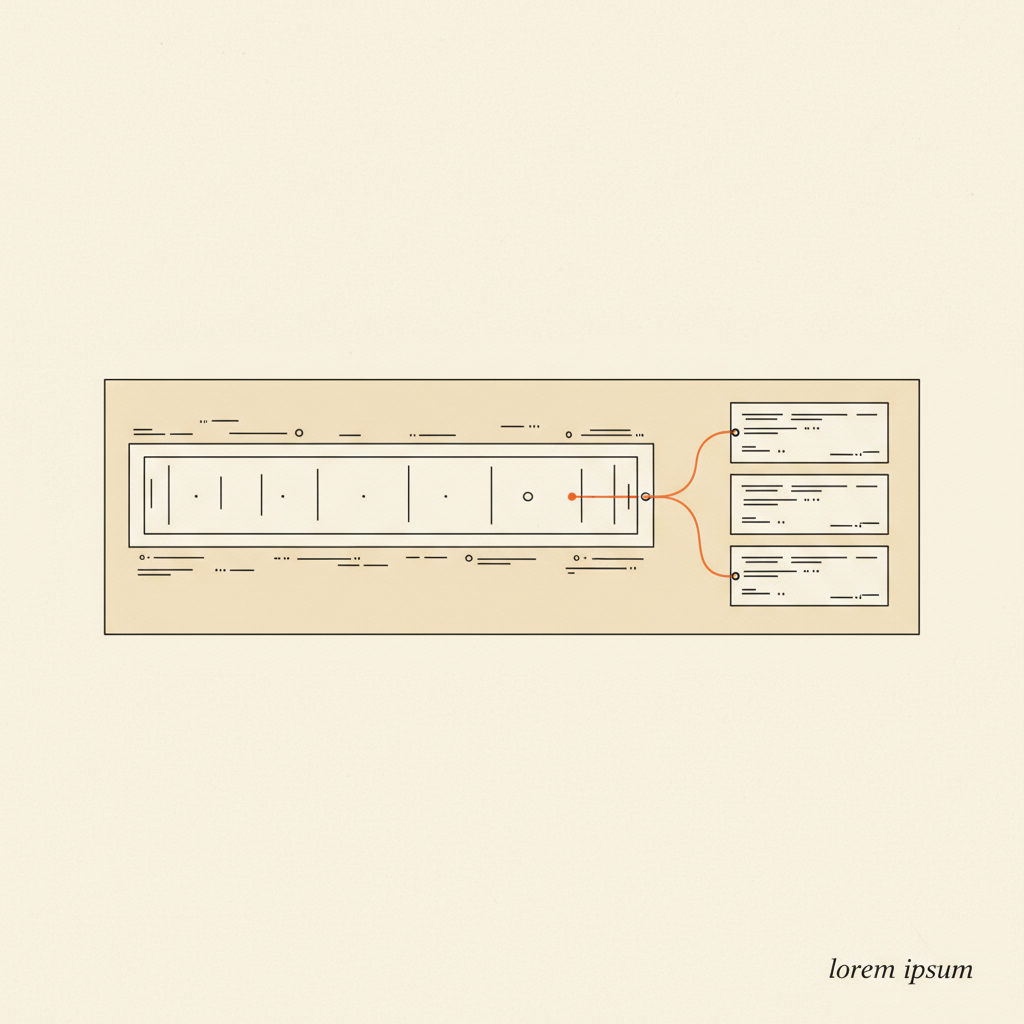

The pain, in order: time, then cadence, then creative dilution. The system is built to address all three, with the third taken seriously enough to constrain how the first two are solved.